AI Guardrails and Content Compliance

AI guardrails for your content standards.

The most comprehensive suite of content governance solutions to make your AI-generated writing compliant with your standards.

Trusted by the companies who care the most about writing standards

What are AI guardrails?

AI guardrails are controls that regulate generative AI so that it complies with laws and standards and doesn't cause harm. AI guardrails for writing standards make sure that all your AI-generated content is true to your brand and your writing standards. These types of AI guardrails are closely related to content governance:

AI guardrails for writing standards are content governance for AI-generated content in action.

How Acrolinx sets AI guardrails through content governance

Regardless of how you’re deploying generative AI in your organization, Acrolinx’s automated content governance and content quality assurance for writers mesh with your technology stack to ensure the quality of:

LLM fine-tuning content

LLM-generated content

Content QA for writers

Content in development

Published content

Acrolinx’s AI guardrails lead to high-quality content compliance

Quality assure content used for fine-tuning

Quality content in means quality content out. With content quality assurance, you make sure the content your business uses to fine-tune your LLMs meets enterprise standards, which in turn improves your model's output.
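To make the idea concrete, here is a minimal sketch of filtering fine-tuning examples by a quality score before they reach the model. It assumes a checker that returns a score from 0 to 100 for a piece of text. The names `toy_score` and `filter_examples`, the list of discouraged terms, and the threshold of 80 are all hypothetical stand-ins for illustration. This is not the Acrolinx API.

```python
from typing import Callable, List

# Hypothetical terms that a style guide might discourage.
DISCOURAGED_TERMS = ("utilize", "very", "simply")


def toy_score(text: str) -> float:
    """Toy stand-in for a real content checker (an assumption, not a product API).

    Starts at 100 and subtracts 20 points for each occurrence of a
    discouraged term. Matching is by substring, so this is deliberately crude.
    """
    lowered = text.lower()
    hits = sum(lowered.count(term) for term in DISCOURAGED_TERMS)
    return max(0.0, 100.0 - 20.0 * hits)


def filter_examples(
    examples: List[str],
    score: Callable[[str], float],
    threshold: float = 80.0,
) -> List[str]:
    """Keep only the examples whose score meets the threshold."""
    return [text for text in examples if score(text) >= threshold]


examples = [
    "Select Save to store your changes.",
    "Simply utilize the very handy Save button.",
]

# Only examples that meet the hypothetical standard go into the fine-tuning set.
kept = filter_examples(examples, toy_score)
print(kept)
```

In practice, the scoring function would be replaced by whichever checker enforces your own writing standards. The filtering step stays the same.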

Quality assure generated LLM completions

Check the quality of the content your LLM generates before your writers even see it. Acrolinx integrates into your generative AI workflows to check, score, and improve content as it’s generated. This makes sure content follows enterprise style guides and writing standards.

Content QA for writers

Provide writers with editorial guidance directly within the applications they use every day. Use our sidebar to instantly check human and AI-generated content to maintain content quality and enterprise writing standards.

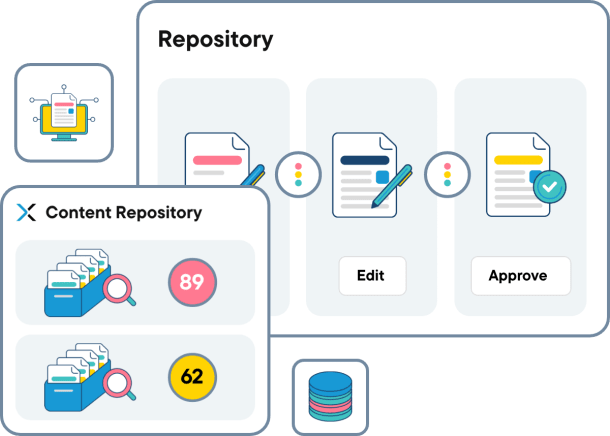

Automated quality gates

Integrate Acrolinx into your content workflows to check and score the quality of content automatically before it’s published. This makes sure only high-quality content is published while low-quality content is held for editorial review.
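A quality gate of this kind can be sketched as a small function that scores a draft and then decides whether to publish it or hold it for review. The sketch below is illustrative only. The scoring rule (share of sentences within a word limit), the names `toy_score`, `quality_gate`, and `GateResult`, and the threshold of 80 are assumptions made for the example, not the Acrolinx API or its scoring method.

```python
import re
from dataclasses import dataclass

# Hypothetical style rule: sentences should not exceed this many words.
MAX_WORDS_PER_SENTENCE = 12


def toy_score(text: str) -> int:
    """Toy stand-in for a real checker: percentage of sentences within the limit."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0
    within_limit = sum(
        1 for s in sentences if len(s.split()) <= MAX_WORDS_PER_SENTENCE
    )
    return round(100 * within_limit / len(sentences))


@dataclass
class GateResult:
    score: int
    decision: str  # "publish" or "hold_for_review"


def quality_gate(text: str, threshold: int = 80) -> GateResult:
    """Score the text and decide whether it passes the gate."""
    score = toy_score(text)
    decision = "publish" if score >= threshold else "hold_for_review"
    return GateResult(score=score, decision=decision)


short_draft = "Open the app. Select Save."
long_draft = (
    "This sentence keeps going and going with far too many words "
    "to pass the hypothetical length rule."
)

print(quality_gate(short_draft))
print(quality_gate(long_draft))
```

A step like this would typically run in a publishing pipeline, with held content routed to an editor instead of being discarded.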

Maintain the quality of published content

Writing standards change, be it due to regulation changes, new product releases, mergers, acquisitions, or some other event. Acrolinx continually checks the quality of published content and identifies content that no longer meets enterprise writing standards. This saves you the painstaking effort of having to review content manually.

AI guardrails for content help prevent abuse and harmful content

Our relationship with Microsoft Azure OpenAI provides you with built-in LLM content filtering. For you, this means your LLM technology blocks the generation of offensive, abusive, hateful, and explicit content.

Harm

Blocking the generation of content about self-inflicted injury, illegal substances, or abuse.

Hate

Blocking the generation of distasteful and disrespectful content.

Explicit

Blocking the generation of sexually suggestive and offensive content.

Violence

Blocking the generation of graphic depictions of assault and battery in content.

Love a good read?

Discover our newest resources for content enthusiasts.

Keep Content on Track With AI Guardrails for Content Standards

Learn how to fine-tune your LLM with quality-assured content and use AI guardrails to protect your brand and content standards.

Siemens Healthineers

How MAN Improved Product Documentation with Acrolinx

What our customers say

Learn how AI guardrails support content creation

Book a call to learn how Acrolinx AI guardrails for content support content creation and promote the safe use of your private LLMs.