Best-in-Class Enterprise LLM

Boost your writing with leading enterprise LLM technology.

Experience superior flexibility and security with our AI infrastructure powered by Azure. Acrolinx keeps your private and sensitive information confidential. User data and activity are never shared with public models or used to train external AI models.

Trusted by the companies who care the most about writing standards

Your secure enterprise LLM

There are multiple ways to use LLMs (large language models) as an enterprise. The Acrolinx approach provides both content governance and enterprise-grade security. With Acrolinx, you get a secure LLM powered by Microsoft Azure AI that lets writers fix and generate content aligned with your corporate style guide.

The premier AI text enhancer for your writers

Get writing suggestions

Acrolinx provides real-time suggestions to improve your writing. Suggestions are based on your style guide, so every improvement brings your content in line with your enterprise writing standards.

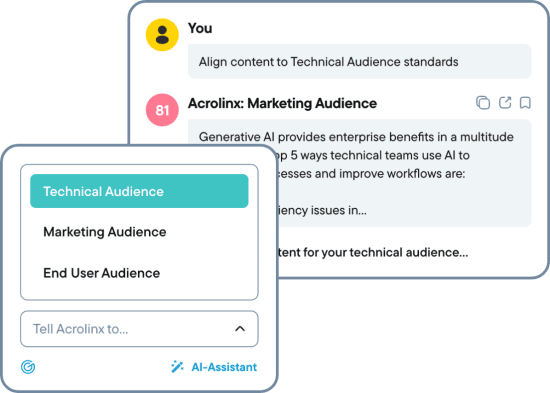

Leverage the AI Assistant

Generate brand-new content that’s compliant, on-brand, and resonates with your audience. This way, you’re able to overcome editorial bottlenecks and scale content production to meet your business goals.

Best-in-class LLM infrastructure

Acrolinx customers benefit from the best in LLM technologies. Because Acrolinx partners with Microsoft Azure AI, you’re not locked into a single proprietary model, which means that as the language technology industry evolves, so will you. At the same time, your data is yours alone and isn’t shared with a public model. This way, you can use generative AI without the fear of breaching your enterprise’s security guidelines.

Long story short, Acrolinx offers you complete peace of mind:

Confidential Data

Use your data without fear that it will leak outside your organization.

Secure Infrastructure

Your data won’t be processed by public systems.

Data Ownership

Your data won’t be used to train external AI models.

Future Proof

Designed to integrate with new approved LLMs.

Content efficiency with improved compliance

Using your private enterprise LLM by Acrolinx not only helps you create amazing content efficiently, it also makes sure your teams generate content based on approved content, limiting compliance risks. Additionally, harmful content is blocked. All this comes in a secure yet future-proof LLM infrastructure.

Content safety and responsible guardrails

Our partnership with Microsoft Azure provides you with built-in LLM security guardrails. For you, this means your LLM technology blocks the generation of offensive content.

Harm

Blocking the generation of content involving self-inflicted injury, illegal substances, or abuse.

Hate

Blocking the generation of distasteful and disrespectful content.

Explicit

Blocking the generation of sexually suggestive and offensive content.

Violence

Blocking the generation of graphic depictions of assault and battery in content.

Love a good read?

Discover our newest resources for content enthusiasts.

How To Prepare Your Content for an LLM

Produce Better Content with LLM Guardrails

If you’re deploying an LLM, you need to…

Siemens Healthineers

How MAN Improved Product Documentation with Acrolinx

What our customers say

Learn more about our enterprise-ready technology

See how our AI capabilities help you create and maintain high-quality content.